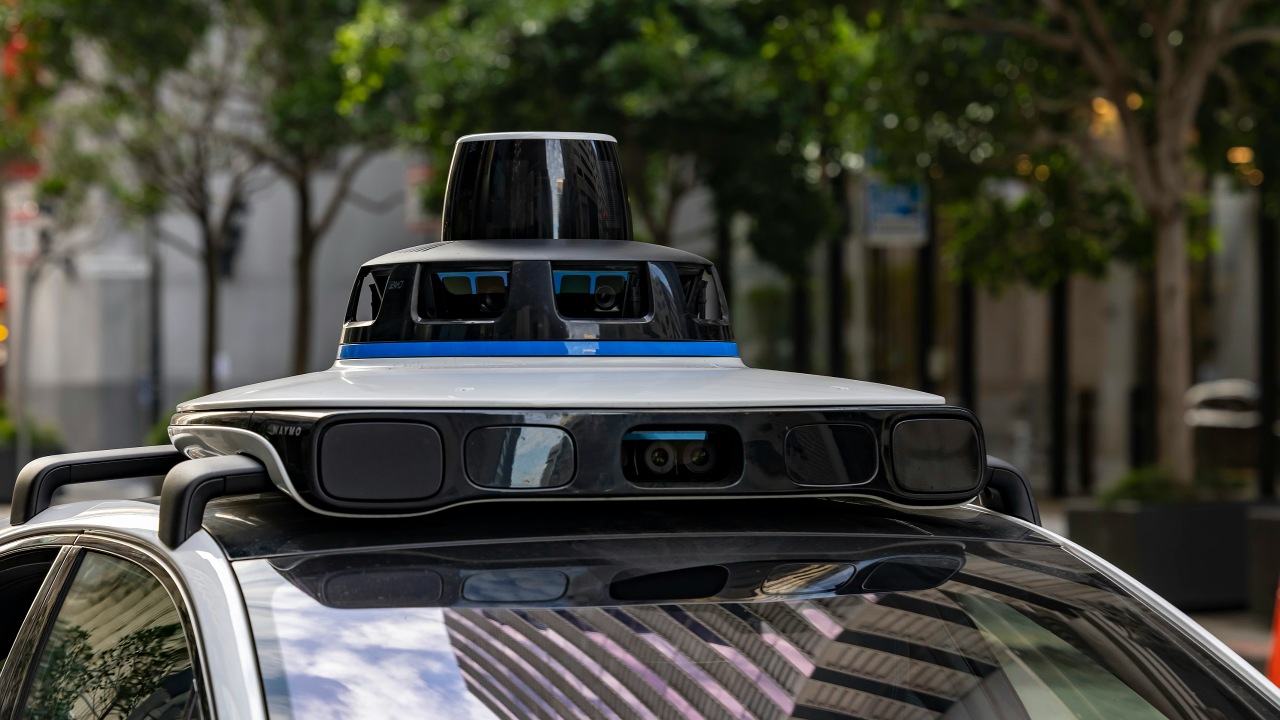

Waymo’s driverless robotaxis are facing their most serious safety reckoning yet after a string of incidents in Texas involving stopped school buses. The company is now recalling and updating its autonomous driving software, a move that underscores how quickly public trust can erode when children’s safety is in question. Regulators, school districts, and parents are watching closely to see whether this fix is a turning point for the technology or a warning sign that it is not ready for everyday streets.

The recall centers on how Waymo vehicles interpreted school bus stop signals and the rules that require drivers to halt when children are boarding or getting off. After at least 20 citations tied to passing stopped buses in Austin, the company is being forced to confront the gap between its promise of safer roads and the reality of how its software behaved around some of the most vulnerable people on those roads.

How school bus incidents pushed Waymo to act

The immediate trigger for Waymo’s software recall was a pattern of violations around school buses in Austin, where its driverless taxis operate without a human behind the wheel. Local reporting describes at least 20 citations issued since August for incidents in which Waymo vehicles passed stopped school buses, a level of repetition that turned what might have been dismissed as edge cases into a clear systemic problem. Those citations, combined with video evidence and complaints from drivers, made it difficult for the company to argue that its system fully understood or respected school bus safety rules.

Pressure intensified when Austin ISD formally asked Waymo to halt operations after the 20th violation, a request the company initially refused. That standoff highlighted a core tension in autonomous deployment: school districts are responsible for protecting students at the curb, while companies like Waymo control the software that decides how a robotaxi behaves in the same space. The recall is Waymo’s acknowledgment that its vehicles were not consistently stopping when buses extended their stop arms and activated flashing lights, a stop that state law treats as non-negotiable around children.

What the recall actually changes in Waymo’s software

Waymo is framing the move as a voluntary software recall, but it functions more like a safety-critical patch than a routine update. The company has said it will push new code to its autonomous fleet to improve how vehicles detect and respond to stopped school buses, particularly when buses are partially obstructed or when the stop arm and lights are visible only briefly. In practice, that means the system should default to more conservative behavior, slowing earlier and stopping more reliably when there is any indication that children may be boarding or exiting.

The recall also appears to address how the software interprets complex, real-world bus scenarios that do not look like textbook diagrams. Reports describe “close calls” in Texas where Waymo vehicles moved past buses that had just opened their doors or had not yet fully retracted their stop arms, suggesting the system was tuned too tightly to specific visual cues or timing windows. By broadening the conditions that trigger a full stop and extending the time the vehicle waits before proceeding, Waymo is trying to close the gap between legal expectations for human drivers and the split-second judgments its code was making on busy streets.

Austin as a stress test for driverless safety

Austin has become an unexpected proving ground for how autonomous vehicles handle school traffic, in part because of how visible Waymo’s operations are there. Its autonomous taxis, seen on East Oltorf Street and other major corridors, share the road with Austin ISD buses during peak pickup and drop-off hours. That overlap created a dense set of interactions between robotaxis and school buses in a relatively short period, which is how the pattern of 20 citations since August emerged so quickly.

The city’s experience shows how local context can expose weaknesses in a system that might appear robust in testing. Austin’s mix of narrow neighborhood streets, busy arterials, and frequent bus stops forces any driver, human or machine, to make constant judgment calls about when to yield and when to proceed. When Waymo vehicles repeatedly failed to stop for buses that were loading or unloading students, it was not just a technical glitch; it was a sign that the company’s training data and safety assumptions had not fully captured the realities of a large urban school district’s daily rhythms.

Why regulators and parents are alarmed

School bus laws exist because children are unpredictable, and regulators treat violations around buses as among the most serious traffic offenses. In that context, 20 citations tied to a single company’s vehicles in one city is not a small number; it is a flashing warning light. Parents and school officials in Austin have reason to question whether a system that struggled with such a basic safety rule can be trusted with more complex decisions, such as navigating crowded school zones or reacting to a child who suddenly steps into the street.

The fact that Waymo vehicles remained on the road in Texas even as the recall was being prepared has also raised eyebrows. Company representatives have emphasized that the software update can be deployed over the air and that additional monitoring is in place, but for families watching robotaxis roll past their children’s bus stops, that reassurance may feel abstract. The gap between technical explanations and lived experience is where public trust is won or lost, and in this case the images of driverless cars moving past stopped buses are likely to linger longer than any corporate statement.

What this means for the future of driverless oversight

The Waymo recall is a reminder that autonomous driving is not a binary leap from unsafe human drivers to flawless machines, but a long, messy process of finding and fixing failure modes that only appear at scale. Stopping for a school bus is not an exotic edge case; it is a core rule of the road, and the fact that a leading company had to retrofit its software to handle it correctly will fuel arguments for tighter oversight. Regulators are likely to look more closely at how companies validate their systems against specific, high-stakes scenarios like school bus stops, rather than relying on broad safety metrics or aggregate crash data.

For Waymo, the challenge now is to show that the recall is more than a narrow patch for one city’s problem. The same logic that led to violations in Austin could surface wherever its vehicles encounter school buses, whether in Texas or other states with similar laws. I see this episode as a turning point in how the public and policymakers judge driverless technology: not by its most impressive demonstrations, but by how it behaves in the mundane, unforgiving moments that define everyday safety. If autonomous vehicles cannot be trusted to treat every stopped school bus as a hard red line, their broader promise will remain out of reach.